Tier‑1 Institutional Gap Analysis

What ministries, DFIs, climate funds, sovereign programmes, and auditors will look for after reading your homepage. This page makes the remaining institutional questions explicit and sets out the next level of architecture, controls, and diligence posture that reviewers will expect.

Why this page matters

Your homepage already signals the governance spine clearly. Tier‑1 reviewers, however, will move immediately from signal to diligence. They will test whether the Hub can demonstrate evidence lineage, reviewer controls, deployment isolation, sovereignty posture, assurance logic, and audit reconstruction with the clarity that ministries, DFIs, climate funds, sovereign programmes, and auditors require.

Schema-to-Narrative Connector

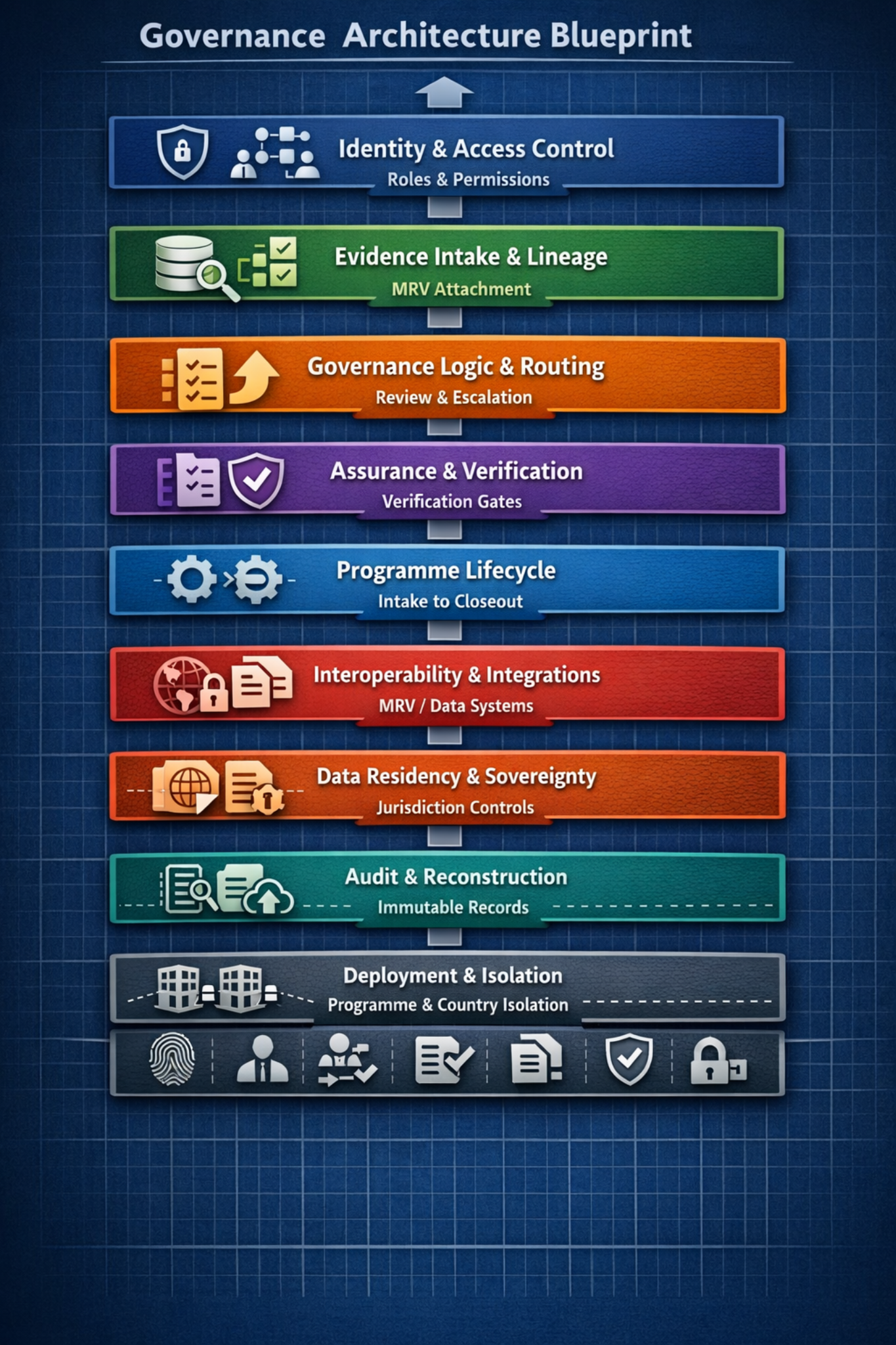

Each governance layer corresponds to a schema module (Identity, Evidence, Routing, Assurance, Audit) implemented in the RLS & Audit Schema, where RLS refers to row-level security.

This connects the public governance narrative to the technical control model reviewers will expect to see in diligence.

1. Evidence Lineage & MRV Attachment Logic

Questions reviewers will ask once they have seen the governance spine and the routed example:

- How does evidence maintain lineage across revisions?

- How are MRV artefacts attached, versioned, and locked?

- What prevents evidence drift across multi‑year programmes?

- How does the Hub handle conflicting evidence or contested MRV inputs?

The homepage signals the logic but does not yet expose the full lineage model or MRV attachment schema.

Reviewers may assume gaps in auditability unless they see the deeper architecture.

Evidence objects remain tied to programme context, revision history, MRV attachment, reviewer status, and export posture so lineage can be reconstructed rather than inferred.
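A minimal sketch of the lineage model described above, assuming an append-only revision chain in which each revision records its parent, its MRV attachment IDs, its reviewer status, and its export posture. `EvidenceRevision`, `EvidenceLineage`, and every field name are hypothetical, not the Hub's actual schema.

```python
from dataclasses import dataclass
from typing import Optional


# Hypothetical evidence-revision record; field names are illustrative, not the Hub's schema.
@dataclass(frozen=True)  # frozen: a stored revision cannot be edited in place
class EvidenceRevision:
    evidence_id: str
    revision: int
    programme_id: str
    parent_revision: Optional[int]  # None for the first revision
    mrv_attachments: tuple          # MRV attachment IDs, fixed when the revision is created
    reviewer_status: str            # e.g. "pending", "accepted", "contested"
    export_posture: str             # e.g. "internal", "committee"


class EvidenceLineage:
    """Append-only revision history for one evidence object (illustrative sketch)."""

    def __init__(self, evidence_id: str, programme_id: str) -> None:
        self.evidence_id = evidence_id
        self.programme_id = programme_id
        self._revisions: list = []

    def add_revision(self, mrv_attachments, reviewer_status="pending",
                     export_posture="internal") -> EvidenceRevision:
        # Each new revision points at the latest one, so history is never rewritten.
        parent = self._revisions[-1].revision if self._revisions else None
        rev = EvidenceRevision(
            evidence_id=self.evidence_id,
            revision=len(self._revisions) + 1,
            programme_id=self.programme_id,
            parent_revision=parent,
            mrv_attachments=tuple(mrv_attachments),
            reviewer_status=reviewer_status,
            export_posture=export_posture,
        )
        self._revisions.append(rev)
        return rev

    def reconstruct(self, revision: int) -> list:
        """Follow parent links from `revision` back to revision 1, oldest first."""
        by_number = {r.revision: r for r in self._revisions}
        chain = []
        current = by_number.get(revision)
        while current is not None:
            chain.append(current)
            current = by_number.get(current.parent_revision)
        return list(reversed(chain))
```

Under this design, a contested MRV input would be recorded as a new revision with `reviewer_status="contested"` rather than as an edit to an earlier one, so the full history remains reconstructable.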

2. Role Model & Reviewer Controls

Reviewers will expect to see:

- a clear role hierarchy

- separation‑of‑duties logic

- override permissions

- escalation authority boundaries

- reviewer accountability safeguards

- how conflicts of interest are prevented or logged

The role model is implied but not explicitly documented.

DFIs and auditors will flag this as a governance‑control blind spot.

Reviewer actions are named, attributed, time-stamped, permission-bounded, and routed through defined escalation paths so discretion remains visible and accountable.
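The controls reviewers will look for can be sketched as a permission check, a separation-of-duties rule, and a conflict-of-interest register placed in front of an attributed, time-stamped action log. The role names, `ROLE_PERMISSIONS`, and `ActionLog` are hypothetical, and the sketch assumes each caller's role has already been authenticated.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional

# Hypothetical role-to-permission map; a real deployment would load this from configuration.
ROLE_PERMISSIONS = {
    "submitter": {"submit"},
    "reviewer": {"review", "escalate"},
    "approver": {"approve", "escalate"},
    "override_authority": {"override"},
}


class ControlViolation(Exception):
    """Raised when an action breaks a permission, separation-of-duties, or conflict rule."""


@dataclass(frozen=True)
class ReviewerAction:
    actor: str
    role: str
    action: str
    item_id: str
    timestamp: datetime


class ActionLog:
    # Sketch assumes `role` has already been bound to an authenticated identity.
    def __init__(self) -> None:
        self.actions = []     # accepted, attributed, time-stamped actions
        self.refused = []     # refused attempts are logged too, not silently dropped
        self.submitters = {}  # item_id -> actor who submitted it
        self.conflicts = {}   # actor -> set of item_ids with a declared conflict

    def declare_conflict(self, actor: str, item_id: str) -> None:
        self.conflicts.setdefault(actor, set()).add(item_id)

    def _check(self, actor, role, action, item_id) -> Optional[str]:
        if action not in ROLE_PERMISSIONS.get(role, set()):
            return f"role {role!r} may not {action!r}"
        if action != "submit" and self.submitters.get(item_id) == actor:
            # Separation of duties: nobody reviews or approves their own submission.
            return f"{actor} submitted {item_id} and cannot {action} it"
        if item_id in self.conflicts.get(actor, set()):
            return f"{actor} declared a conflict on {item_id}"
        return None

    def record(self, actor: str, role: str, action: str, item_id: str) -> ReviewerAction:
        stamp = datetime.now(timezone.utc)
        reason = self._check(actor, role, action, item_id)
        if reason is not None:
            self.refused.append((stamp, actor, role, action, item_id, reason))
            raise ControlViolation(reason)
        if action == "submit":
            self.submitters[item_id] = actor
        entry = ReviewerAction(actor, role, action, item_id, stamp)
        self.actions.append(entry)
        return entry
```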

3. Multi‑Programme / Multi‑Country Deployment Logic

- How does the Hub isolate programmes, geographies, and institutions?

- When, if ever, can evidence be shared across programmes, and how is that sharing controlled?

- How are jurisdictional constraints enforced at scale?

- How does the Hub prevent cross‑country data leakage?

Deployment models are referenced but not structurally explained.

Sovereign reviewers may hesitate without explicit isolation logic.

Deployment models isolate programmes, countries, institutions, access scopes, and export routes while preserving a common governance spine for oversight.
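Isolation of this kind is often enforced as a default-deny filter on every query, similar in spirit to a row-level security policy. The sketch below assumes each record carries an institution, country, and programme scope, and that cross-programme sharing happens only through explicit grants that never cross a country or institution boundary. `Scope`, `Record`, `can_read`, and the grant format are hypothetical.

```python
from dataclasses import dataclass


# Hypothetical scope model: every record belongs to one institution, country, and programme.
@dataclass(frozen=True)
class Scope:
    institution: str
    country: str
    programme: str


@dataclass(frozen=True)
class Record:
    record_id: str
    scope: Scope


def can_read(user_scopes: frozenset, record: Record, share_grants: dict) -> bool:
    # Default deny: a user sees a record only if they hold its exact scope.
    if record.scope in user_scopes:
        return True
    # Cross-programme sharing requires an explicit grant naming the receiving programme,
    # and never crosses an institution or country boundary.
    granted = share_grants.get(record.record_id, set())
    return any(
        s.programme in granted
        and s.country == record.scope.country
        and s.institution == record.scope.institution
        for s in user_scopes
    )


def visible(records, user_scopes, share_grants=None) -> list:
    """Filter applied to every query, in the spirit of a row-level security policy."""
    grants = share_grants or {}
    return [r for r in records if can_read(user_scopes, r, grants)]
```

In this sketch, a grant to a programme with the same name in another country still returns nothing, which is the cross-country leakage case reviewers will probe.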

4. Data Residency, Export Control & Retention

Reviewers will expect to see:

- explicit residency options

- export‑control enforcement logic

- retention schedules

- deletion and archival posture

- cross‑border transfer constraints

- audit logs for export events

The posture is strong but lacks a dedicated, detailed page.

Reviewers may request a data‑sovereignty annex before proceeding.

Data sovereignty posture, residency options, retention rules, access policy governance, and export controls are documented as configurable institutional constraints.
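A minimal sketch of residency, export, and retention rules treated as configurable constraints, assuming one policy object per deployment. `ResidencyPolicy`, `ExportGate`, `retention_action`, the region names, and the legal-hold flag are hypothetical placeholders.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta


# Hypothetical per-deployment policy; region names and retention period are placeholders.
@dataclass(frozen=True)
class ResidencyPolicy:
    home_region: str
    allowed_export_regions: frozenset
    retention_days: int


@dataclass
class ExportGate:
    policy: ResidencyPolicy
    export_log: list = field(default_factory=list)

    def request_export(self, record_id: str, destination: str,
                       requested_by: str, on: date) -> bool:
        allowed = (destination == self.policy.home_region
                   or destination in self.policy.allowed_export_regions)
        # Every export attempt is logged, whether it is allowed or refused.
        self.export_log.append({
            "record_id": record_id,
            "destination": destination,
            "requested_by": requested_by,
            "date": on.isoformat(),
            "allowed": allowed,
        })
        return allowed


def retention_action(created: date, today: date, policy: ResidencyPolicy,
                     legal_hold: bool = False) -> str:
    # An assumed legal or audit hold overrides the schedule; otherwise the record is
    # flagged for archival or deletion once its retention period has elapsed.
    if legal_hold:
        return "retain"
    expiry = created + timedelta(days=policy.retention_days)
    return "due_for_disposal" if today >= expiry else "retain"
```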

5. Assurance & Verification Pathways

- How does the Hub support verification workflows?

- How are conditions validated or closed?

- How does the Hub prevent unverifiable evidence from advancing?

- What is the Hub’s relationship to external MRV systems?

Assurance logic is implied but not explicitly mapped.

Climate funds and auditors may see this as an assurance‑layer gap.

Evidence advances through verification gates, condition closure, reviewer attribution, MRV attachment, and export-readiness controls before it becomes committee-ready.
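The gate sequence can be sketched as an ordered list of named checks that must all pass before an item becomes committee-ready. `EvidenceItem`, `GATES`, and the gate names are hypothetical and map onto the controls named above: verification with reviewer attribution, condition closure, MRV attachment, and export readiness.

```python
from dataclasses import dataclass, field
from typing import Optional


# Hypothetical evidence item; fields mirror the controls named above, not a real schema.
@dataclass
class EvidenceItem:
    item_id: str
    mrv_attachments: list = field(default_factory=list)
    verified_by: Optional[str] = None  # reviewer attribution for the verification step
    open_conditions: set = field(default_factory=set)
    export_ready: bool = False


# Each gate is a (name, check) pair; an item advances only if every check passes.
GATES = [
    ("verified", lambda e: e.verified_by is not None),
    ("conditions_closed", lambda e: not e.open_conditions),
    ("mrv_attached", lambda e: bool(e.mrv_attachments)),
    ("export_ready", lambda e: e.export_ready),
]


def failed_gates(item: EvidenceItem) -> list:
    return [name for name, check in GATES if not check(item)]


def advance_to_committee(item: EvidenceItem) -> str:
    failures = failed_gates(item)
    if failures:
        # Unverifiable or incomplete evidence is blocked, with the reasons made explicit.
        raise ValueError(f"{item.item_id} blocked by: {', '.join(failures)}")
    return "committee_ready"
```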

6. Programme Lifecycle Logic

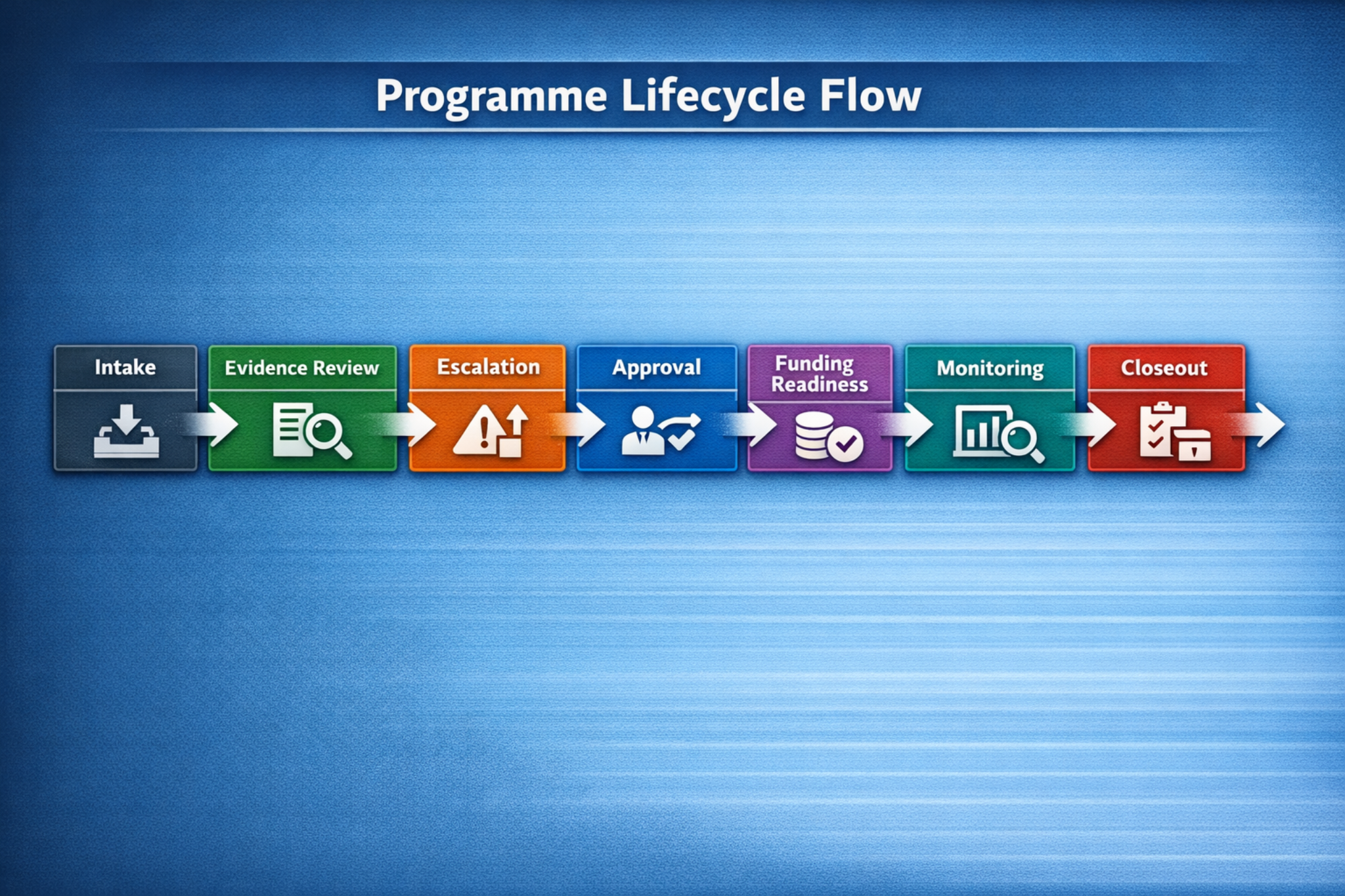

The homepage shows the governance spine, and the new Programme Lifecycle Flow makes the lifecycle visible: intake → evidence review → escalation → approval → funding readiness → monitoring → closeout.

Reviewer teams may still ask for lifecycle-specific controls, evidence requirements, and exception pathways by programme type.

DFIs can see that the Hub is not limited to early-stage governance; it covers the full lifecycle through closeout.

The Programme Lifecycle Flow now shows intake, evidence review, escalation, approval, funding readiness, monitoring, and closeout as one continuous governed sequence.
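The lifecycle can be expressed as an explicit transition table, so any move outside the governed sequence is rejected. This sketch assumes escalation is an optional branch out of evidence review that either returns to review or proceeds to approval; `Stage`, `TRANSITIONS`, and `Programme` are hypothetical.

```python
from enum import Enum


class Stage(Enum):
    INTAKE = "intake"
    EVIDENCE_REVIEW = "evidence review"
    ESCALATION = "escalation"
    APPROVAL = "approval"
    FUNDING_READINESS = "funding readiness"
    MONITORING = "monitoring"
    CLOSEOUT = "closeout"


# Assumed transition table; escalation is modelled as an optional branch out of review.
TRANSITIONS = {
    Stage.INTAKE: {Stage.EVIDENCE_REVIEW},
    Stage.EVIDENCE_REVIEW: {Stage.ESCALATION, Stage.APPROVAL},
    Stage.ESCALATION: {Stage.EVIDENCE_REVIEW, Stage.APPROVAL},
    Stage.APPROVAL: {Stage.FUNDING_READINESS},
    Stage.FUNDING_READINESS: {Stage.MONITORING},
    Stage.MONITORING: {Stage.CLOSEOUT},
    Stage.CLOSEOUT: set(),  # terminal stage
}


class Programme:
    def __init__(self, programme_id: str) -> None:
        self.programme_id = programme_id
        self.stage = Stage.INTAKE
        self.history = [Stage.INTAKE]  # full path kept for later reconstruction

    def move_to(self, target: Stage) -> None:
        if target not in TRANSITIONS[self.stage]:
            raise ValueError(f"cannot move from {self.stage.value} to {target.value}")
        self.stage = target
        self.history.append(target)
```

Lifecycle-specific controls and exception pathways by programme type would then become per-type variants of the same table rather than ad hoc checks.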

7. Interoperability & Integrations

- What systems does the Hub integrate with?

- How are integrations governed?

- How is data integrity preserved across integrations?

- What is the API posture?

No explicit interoperability narrative is present.

Reviewers may assume integration limitations.

Integrations are treated as governed data inputs with source attribution, integrity boundaries, MRV/data-system mapping, and no route around the governance spine.
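One way to keep integrations from routing around the governance spine is to accept only signed payloads from registered sources, attach source attribution and a content hash, and land every record at intake. This sketch uses HMAC-SHA256 from Python's standard library; `REGISTERED_SOURCES`, the source name, the shared key, and `ingest` are hypothetical, and a production deployment would hold keys in a secrets store rather than in code.

```python
import hashlib
import hmac
import json
from dataclasses import dataclass

# Hypothetical registry of approved external systems and their shared signing keys.
REGISTERED_SOURCES = {"mrv-system-x": b"demo-shared-secret"}


@dataclass(frozen=True)
class InboundRecord:
    source: str          # source attribution
    payload: dict
    payload_sha256: str  # integrity fingerprint of the exact bytes received
    stage: str           # integrated records always enter at intake


def sign(source_key: bytes, body: bytes) -> str:
    return hmac.new(source_key, body, hashlib.sha256).hexdigest()


def ingest(source: str, body: bytes, signature: str) -> InboundRecord:
    key = REGISTERED_SOURCES.get(source)
    if key is None:
        raise PermissionError(f"unregistered source: {source}")
    # Constant-time comparison of the expected and supplied signatures.
    if not hmac.compare_digest(sign(key, body), signature):
        raise ValueError("signature mismatch: payload altered or wrong key")
    return InboundRecord(
        source=source,
        payload=json.loads(body),
        payload_sha256=hashlib.sha256(body).hexdigest(),
        stage="intake",
    )
```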

8. Audit Reconstruction Guarantees

Auditors will expect to see:

- reconstruction guarantees

- immutable logs

- tamper‑evident controls

- evidence lineage replay

- reviewer‑action replay

- export‑pack reconstruction

The homepage asserts auditability but does not show the underlying guarantees.

Auditors will request a reconstruction‑logic annex.

Audit schema, reviewer attribution, RLS enforcement, timestamps, conditions, overrides, and export events support replayable reconstruction of decisions and evidence lineage.
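Tamper evidence is commonly achieved by hash-chaining audit events: each event stores the digest of the previous one, so altering any past event is detected when the chain is verified. The sketch below assumes that approach; `AuditLog`, `verify_chain`, and `replay` are hypothetical. Hash chaining makes changes detectable; it does not by itself make storage immutable, which would need write-once storage or external anchoring of the latest digest.

```python
import hashlib
import json
from dataclasses import dataclass

GENESIS = "0" * 64  # placeholder "previous digest" for the first event


@dataclass(frozen=True)
class AuditEvent:
    seq: int
    actor: str
    action: str
    target: str
    timestamp: str  # ISO 8601 string supplied by the caller in this sketch
    prev_digest: str
    digest: str


def _digest(seq, actor, action, target, timestamp, prev_digest) -> str:
    # JSON encoding of the event fields plus the previous digest, hashed with SHA-256.
    body = json.dumps([seq, actor, action, target, timestamp, prev_digest]).encode()
    return hashlib.sha256(body).hexdigest()


class AuditLog:
    """Append-only, hash-chained event log (illustrative sketch)."""

    def __init__(self) -> None:
        self._events = []

    def append(self, actor: str, action: str, target: str, timestamp: str) -> AuditEvent:
        prev = self._events[-1].digest if self._events else GENESIS
        seq = len(self._events)
        event = AuditEvent(seq, actor, action, target, timestamp, prev,
                           _digest(seq, actor, action, target, timestamp, prev))
        self._events.append(event)
        return event

    def events(self) -> tuple:
        return tuple(self._events)


def verify_chain(events) -> bool:
    prev = GENESIS
    for i, e in enumerate(events):
        if e.seq != i or e.prev_digest != prev:
            return False
        if _digest(e.seq, e.actor, e.action, e.target, e.timestamp, e.prev_digest) != e.digest:
            return False
        prev = e.digest
    return True


def replay(events, target: str) -> list:
    """Verify the chain, then return the ordered action history for one target."""
    if not verify_chain(events):
        raise ValueError("audit chain failed verification")
    return [(e.timestamp, e.actor, e.action) for e in events if e.target == target]
```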

9. Procurement‑Ready Documentation

Tier‑1 institutions will expect a procurement‑safe technical overview, security posture summary, data‑sovereignty annex, governance‑architecture diagram, deployment‑model matrix, and risk‑assurance statement.

These exist conceptually but are not yet surfaced as a consolidated Institutional Pack.

Reviewers may question procurement readiness.

The Institutional Pack link is surfaced alongside public review pages and protected donor/committee materials so procurement teams can locate the core documents quickly.

10. Cross‑Sector Governance Logic

- How does the governance spine adapt across sectors?

- What remains constant versus sector‑specific?

- How does MRV differ across sectors?

A Cross‑Sector Governance Invariants section now clarifies what remains constant (identity, evidence lineage, routing, audit, and export posture) and what changes by sector (MRV methodology, evidence type, thresholds, and pack format).

Sector-specific annexes can still be added for agriculture, mining, coastal and marine, blue governance, and sovereign programme deployments.

The site separates cross-sector invariants from sector-specific variables: the governance spine and audit logic remain constant while MRV methods and evidence types adapt.
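The split between invariants and sector variables can be enforced in configuration: a sector profile may set only the named sector variables, while the governance layers stay fixed. `INVARIANT_LAYERS`, `SECTOR_VARIABLES`, `SectorProfile`, `build_profile`, and the example values are hypothetical.

```python
from dataclasses import dataclass
from types import MappingProxyType

# Layers the page names as constant across sectors.
INVARIANT_LAYERS = ("identity", "evidence_lineage", "routing", "audit", "export_posture")
# Settings the page names as varying by sector.
SECTOR_VARIABLES = frozenset({"mrv_methodology", "evidence_types", "thresholds", "pack_format"})


@dataclass(frozen=True)
class SectorProfile:
    sector: str
    variables: MappingProxyType                  # read-only view of the sector settings
    governance_layers: tuple = INVARIANT_LAYERS  # identical for every sector


def build_profile(sector: str, **variables) -> SectorProfile:
    unknown = set(variables) - SECTOR_VARIABLES
    if unknown:
        # A sector cannot switch off or redefine an invariant layer such as "audit".
        raise KeyError(f"{sector} profile cannot set {sorted(unknown)}")
    missing = SECTOR_VARIABLES - set(variables)
    if missing:
        raise KeyError(f"{sector} profile is missing {sorted(missing)}")
    return SectorProfile(sector, MappingProxyType(dict(variables)))
```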

Summary of Tier‑1 Gaps

| Category | What’s Missing | Why Tier‑1 Institutions Care |
|---|---|---|
| Evidence lineage | Full lineage and MRV attachment schema | Auditability and defensibility |
| Role model | Explicit roles, overrides, and escalation | Accountability and separation of duties |
| Deployment models | Multi‑programme, multi‑country isolation | Sovereign risk and data leakage |
| Data sovereignty | Detailed residency, export, and retention posture | Compliance and legal exposure |
| Assurance logic | Verification and condition‑closure pathways | MRV integrity |
| Lifecycle | Programme lifecycle flow now added; lifecycle-specific controls can be expanded by deployment | Funding governance clarity |
| Integrations | Interoperability posture | System compatibility |
| Audit guarantees | Immutable logs and replay logic | Fiduciary assurance |
| Procurement pack | Institutional Pack link added; full procurement pack can mature into a consolidated downloadable bundle | Procurement readiness |
| Cross‑sector logic | Invariants table added; sector-specific annexes can expand by deployment | Multi‑sector adoption |